Azure Automation And SQL DB

February 2, 2016 4 Comments

Author’s Note: The version of this script on GitHub was updated in May of 2016 to include the Azure AD tenant ID where the credential exists, as well as the Subscription ID to make sure the proper subscription is selected. This should help in situations where there are multiple subscriptions. You will also need to include these variable values when setting up your job schedule.

What a difference two months makes. Two months ago, I helped an old friend out with a customer by working up a sample of using Azure Automation to resize an Azure SQL DB. At the time, importing modules wasn’t as seamless an experience as anyone would have liked. So I intended to blog about it, but simply didn’t get around to it.

Until today! With our recent addition of the Azure Automation module gallery, this has just gotten easier. Given that someone invested in helping build that functionality out, I figured I couldn’t slack off and not write about this any longer. So here we go…

Resizing Azure SQL DB’s

First off we need to address a bit of a misconception. While Azure SQL DB prices are stated in terms of “per month”, the reality is that you are billed “per hour” (see the FAQ). You can adjust the service tier, performance level, or eDTUs as often as you want. Within a given hour, the price you pay will be that of the highest option you exercised during that hour.
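To make the hourly billing rule concrete, here is a minimal Python sketch. The hourly rates are placeholder numbers invented for illustration, not real Azure prices, and `HYPOTHETICAL_RATES`, `hourly_charge`, and `daily_charge` are hypothetical names. It only models the rule described above: within a given hour, you pay for the highest option that was active.

```python
# Placeholder hourly rates, invented for illustration only (not real Azure prices).
HYPOTHETICAL_RATES = {"Basic": 1, "S1": 4, "S2": 10}  # cost units per hour


def hourly_charge(tiers_used_in_hour):
    """Charge for one hour: the most expensive tier active during that hour."""
    return max(HYPOTHETICAL_RATES[tier] for tier in tiers_used_in_hour)


def daily_charge(hours):
    """Sum the hourly charges for a day, given the tiers used in each hour."""
    return sum(hourly_charge(tiers) for tiers in hours)


# A dev database that runs at S2 for 8 working hours and Basic for the other 16.
# The hour in which we scale down still bills at S2, because S2 was active in it.
day = [["S2"]] * 7 + [["S2", "Basic"]] + [["Basic"]] * 16

print(daily_charge(day))            # scaled down each afternoon: 96 units
print(daily_charge([["S2"]] * 24))  # left at S2 all day: 240 units
```

With these made-up rates, scaling down for the evening cuts the daily cost from 240 units to 96, which is the kind of saving the rest of this post is after.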

This means that if you either have predictable performance needs (highs or lows), or are actively monitoring your database utilization (see my previous blog post), you can tweak your database capacity accordingly.

To take advantage of this, we can use Azure Automation and some PowerShell.

Preparing the Database for resizing

Before I dive into setting up the automation itself, let’s discuss a few details. My approach will leverage Azure’s Role Based Access Control (RBAC) features. So manipulating the database will be done by an identity that I’m setting up specifically for Automation, and it will have access to the database(s) I want to manipulate.

Presuming you have access as an Administrator to the Azure AD instance that’s associated with your subscription’s management, locate Active Directory in the portal (search or browse) and select it. This should re-direct you to the old portal (at the time of this writing, Azure AD is not currently available in the new portal). Once there, add a new user.

You can use your own identity for this, but I’m much more a fan of setting up “service” identities for these things. If you don’t have administrative access to AD in your subscription (not uncommon if it’s federated with your on-premises domain), then you’ll need to work with whoever manages your Active Directory domain to get the service identity created.

With the identity created, we can now go to the Azure SQL Database server that’s hosting our database, and add the service identity as a user with the “Owner” role.

This grants the service identity permission to resize (among other things) all the databases on this server.

Setting up our Automation

Now we have to set up an Automation Account in our Azure subscription. It’s this account that will be the container for all our automation tasks, their assets, and any schedules we have them operate under. In the new Azure portal, click on “+ New”, then select “Management”. Alternatively, you can enter “Automation” into the search box and follow that path.

Give your Automation account a globally unique name, and make sure it’s associated with the proper subscription, resource group, and region (Automation is only available in a handful of regions currently).

With the account created, we’ll first add the service identity we created earlier to the Automation account as a Credential asset. A Credential asset will be used by our automation script to execute the commands to resize the database. Other assets include PowerShell Modules, certificates, pre-set variables, etc… This also allows you to have multiple scripts that reuse the same Credential asset without each having to be updated each time the credential changes (say for periodic password changes).

When I first did this a couple months ago, I had to manually add in another PowerShell module. But they’ve started importing more of them by default, so that’s no longer necessary (unless you are tied to specific modules that are not available by default or you need to tag a newer/older version of something that’s already included).

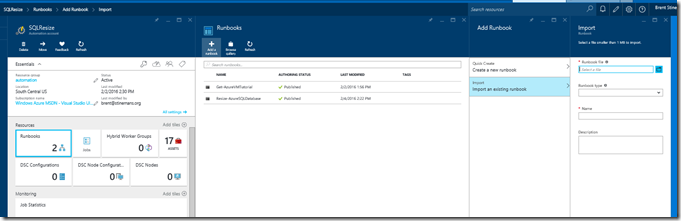

With the credential in place, we can now add the Runbook. I’ve created one for you already, so just download that, and then in the Azure Automation account, import an existing runbook.

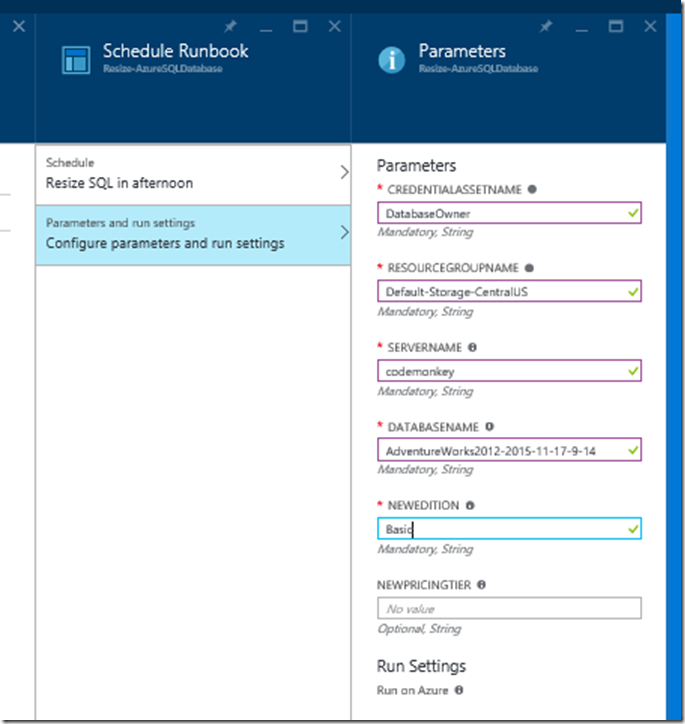

Set the type to “PowerShell Workflow”, give it a meaningful name (one will likely default), and add a description. The import should only take a few seconds, then you can select the new Runbook. Once in, you can go into “edit” mode to either make changes or test it. I highly recommend a test run this way first. To execute a test, you’ll provide the parameters called out in the script…

CredentialAssetName – the name we gave the Credential Asset we created

ResourceGroupName – My script works on new Resource Manager based Azure SQL DB’s. So we want to know what resource group the database is in.

ServerName – the name of the database server (just the first part, leave out the ‘.database.windows.net’). This will need to be in all lower case.

DatabaseName – the name of the database to be resized

NewEdition – what is the new edition we’re resizing to (Basic, Standard, etc…)

NewPricingTier – the tier we’re moving to, such as S1 or S2 (leave blank if the Edition is Basic)
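The parameter rules above (server name without the domain suffix and in lower case, pricing tier blank for Basic) can be checked before a job is started. The following is a minimal Python sketch of such a check. `normalize_parameters` is a hypothetical helper, not part of the runbook or of any Azure SDK, and treating the tier as required for non-Basic editions is my assumption.

```python
SERVER_SUFFIX = ".database.windows.net"


def normalize_parameters(server_name, new_edition, new_pricing_tier=""):
    """Return (server, edition, tier) cleaned up per the rules described above."""
    server = server_name.strip().lower()
    # Keep only the first part of the server name.
    if server.endswith(SERVER_SUFFIX):
        server = server[: -len(SERVER_SUFFIX)]

    edition = new_edition.strip()
    tier = new_pricing_tier.strip()
    if edition.lower() == "basic":
        # The tier is left blank when the edition is Basic.
        tier = ""
    elif not tier:
        # Assumption: any other edition needs an explicit tier such as S1 or S2.
        raise ValueError("NewPricingTier is required unless the edition is Basic")
    return server, edition, tier


print(normalize_parameters("MyServer.database.windows.net", "Standard", "S1"))
print(normalize_parameters("myserver", "Basic", "S1"))
```

The first call returns the cleaned-up server name `myserver` with the Standard/S1 pair intact, and the second drops the tier because the edition is Basic.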

Fill in each appropriately and “Start” the test. Depending on the size of your database, this could take some time, so go get something to drink, check email, etc…

Done? Good! Hopefully the test ran well, so let’s now publish our runbook. Publishing is important because it allows us to edit and test one version of the runbook while another is used by any scheduled occurrences.

With the publish complete, our final step is to schedule this run. In my case, I know each afternoon, I want to set the database back down to “Basic”, because I won’t be using it in the evening (it’s just a dev database). I’ve got the option of creating a new schedule, or associating the job with an existing one. This allows multiple runbooks to be executed on a single schedule entry.

With the schedule set, we just need to set the parameters, much like we did when we tested the script.

Click “Ok” a couple times, and our runbook will be all set to run on our schedule. We can also execute the runbook “on demand” by selecting it in the portal, and clicking on “Start”.

Note: Once created, the portal does not currently let us edit the schedule. You’ll need to remove and re-add it. But you can turn schedules on/off.

Monitoring our Runbook

Each time the Runbook is executed, this is referred to as a job. Either in the Automation Account, or in the Runbook itself, we can select “jobs” and view any jobs that have run recently. This will include both ones that were run “on demand” and ones started by the scheduler. There you can look at the output, as well as the logs that were written.

And there we have it!

So what’s next?

For me, next up is lunch. For you, hopefully this will work out of the box and help you save a few bucks here and there. I would also recommend you check out some of the scripts in the gallery and think of other interesting uses for Azure Automation.

Until next time!

PS – HUGE thanks to the team that has open sourced Live Writer. Works like a dream!

Huge thanks! I’ve been trying to get this to work all day, based on an older script. But it just didn’t work. This worked like a charm. Saving some huge bucks scaling down during the night.

You’re very welcome. Glad it helped you out.

Do you have a suggestion as to how I can pair this up with periodic monitoring of DTU usage that will auto-trigger the appropriate scaling up or down within certain limits?

You could (in theory) create a web job that executes the query and then takes action (such as scaling the database). The trick of course would be ensuring there’s not too much “jitter” in the scaling up and down. I think it’s likely a better approach to analyze the results over time to be able to predict the patterns and then anticipate when actions need to be taken.
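One way to limit that jitter is to use separate scale-up and scale-down thresholds and to require several consecutive readings before acting. The following Python sketch illustrates the idea only. The thresholds, the tier list, and the `next_tier` helper are all assumptions made up for illustration, not a tested autoscaler.

```python
TIERS = ["Basic", "S1", "S2"]  # hypothetical ordered list of allowed tiers


def next_tier(current, recent_dtu_percent, high=80, low=20, samples=3):
    """Pick the next tier from recent DTU utilization readings (0-100).

    Scale up only if the last `samples` readings are all above `high`,
    scale down only if they are all below `low`; otherwise stay put.
    The gap between `low` and `high` is what damps the jitter.
    """
    window = recent_dtu_percent[-samples:]
    if len(window) < samples:
        return current  # not enough data to decide yet
    index = TIERS.index(current)
    if all(value > high for value in window) and index < len(TIERS) - 1:
        return TIERS[index + 1]
    if all(value < low for value in window) and index > 0:
        return TIERS[index - 1]
    return current


print(next_tier("S1", [85, 90, 95]))  # sustained load: S2
print(next_tier("S1", [85, 10, 95]))  # mixed readings: stays at S1
print(next_tier("S1", [5, 10, 15]))   # sustained idle: Basic
```

A sketch like this still only reacts after the fact, which is why predicting the pattern and scheduling ahead of it remains the approach I would lean toward.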